What NASA mission control can teach us about team communication

By Jamie Thompson, May 1, 2026. Last updated May 2, 2026.

Last year I had the opportunity to visit the Johnson Space Center in Houston, Texas. It's an amazing place, charting the history of NASA and spaceflight from the Mercury programme right up until today. There is no shortage of incredible things to see: a colossal Saturn V rocket whose scale is impossible to understand until you are standing beside its engines, a 747 which once carried the Space Shuttle Orbiter, and artefacts spanning the entire history of human spaceflight. But the part I found most interesting was visiting the Apollo mission control room, restored to exactly as it was during the Apollo 11 landing. During the visit they ran a replay of the Moon landing, with the screens flickering to life showing data as it would have appeared in July 1969, the audio filling the room as you follow the landing sequence through to its conclusion: "Houston, Tranquility Base here. The Eagle has landed."

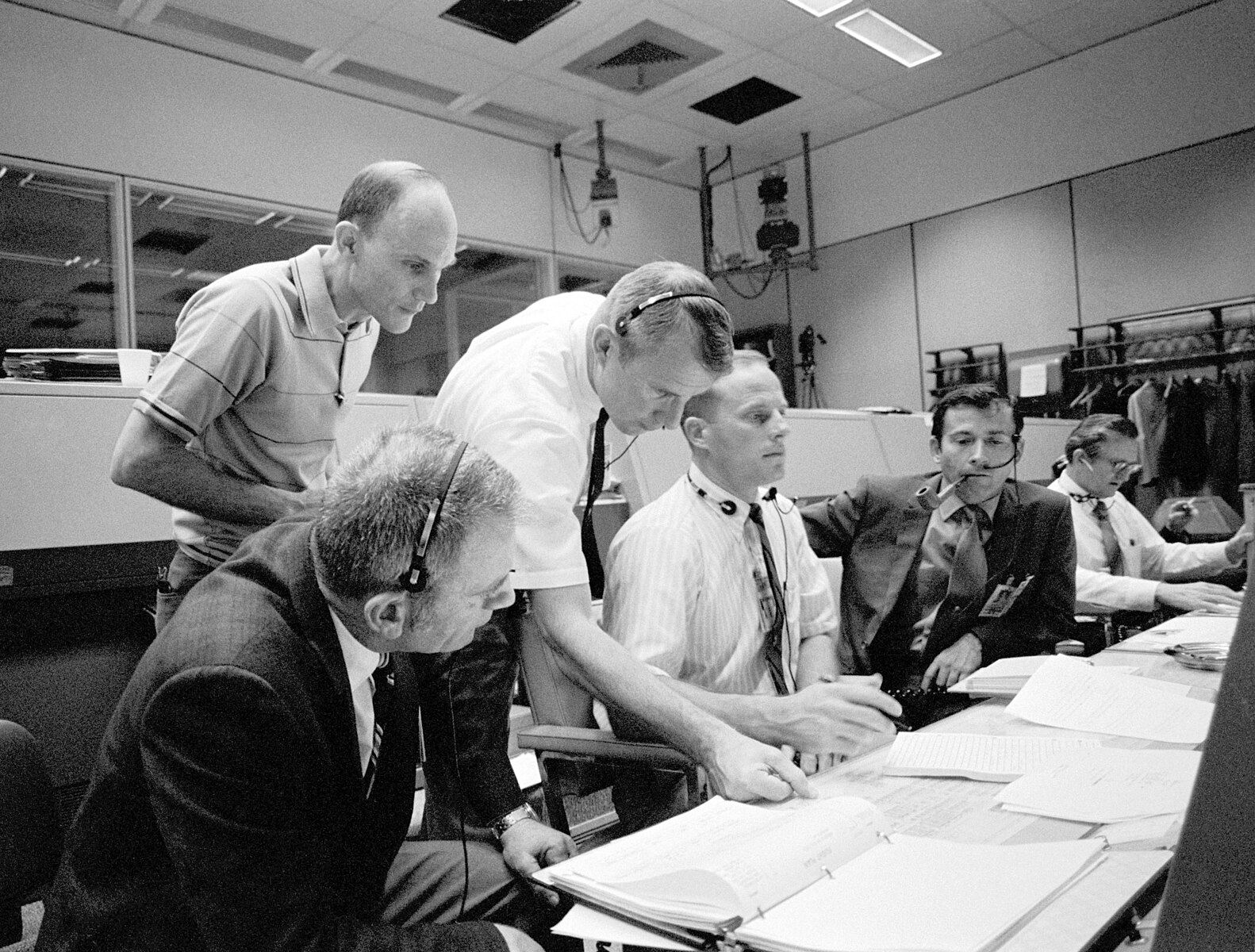

I love technology, and seeing those old terminals come to life was genuinely exciting. But what really struck me wasn't the technology at all. It was how everyone communicated: complex information, shared quickly, simultaneously, and precisely, under the most extreme pressure imaginable.

That team communication culture was carefully built by NASA through years of iteration, training, and rehearsal. The principles behind it are just as relevant today as they were in 1969, and we can apply them directly to our own teams.

Most people don't have to deal with the pressure of landing on the Moon, but that doesn't mean the stakes are low. Every day, teams in businesses and organisations face decisions under time pressure, with real consequences for getting them wrong. Poor communication in these situations carries a measurable cost: a 2022 survey by Grammarly and The Harris Poll found that business leaders estimate their teams lose the equivalent of nearly a full working day every week to poor communication alone.

Many businesses leave communication to chance. They hire for skills and experience, set goals, and assume that information will flow where it needs to go. NASA took a very different approach, and it is one we can learn from.

"Work the problem": facts, not assumptions

Two days into the Apollo 13 mission, an oxygen tank exploded. With the crew's lives on the line, mission control, the support teams in the back rooms at NASA, and the astronauts themselves had to work together under extreme pressure: diagnosing what had happened, understanding what they were dealing with, and iterating towards a solution that would bring the crew home safely.

The phrase "work the problem" may have been polished by Hollywood, but it reflects something real about mission control culture. When things go wrong, flight controllers and astronauts are trained to state only what they know, to label assumptions as assumptions, and to keep status updates calm and precise. No conflating fact with fear. No jumping to solutions before the problem is understood.

The parallel in business is easy to find. Under pressure, teams routinely mix what they know with what they suspect, or what they are afraid of. Meetings spiral into solution mode before anyone has clearly defined the problem. Status updates become long narratives that bury the key information.

Separating facts from assumptions is a simple discipline, but it requires deliberate practice.

Practical takeaways:

- Before jumping to solutions, ask explicitly: what do we actually know, and what are we assuming?

- Keep status updates brief and structured: what has happened, what we know, what we are doing next

- If you are making an assumption, name it as one

Redundancy in information, not decision-making

In mission control, every controller is looped into a network of communications. They can hear what is happening across systems beyond their own. Behind them, rooms of support specialists listen, analyse, and feed information forward. The more people who have the full picture, the less likely it is that something critical gets missed.

Authority, however, does not follow the same logic. Information and recommendations flow up the chain, and the flight director listens and questions, but ultimately the flight director alone decides.

Information is wide. Authority is narrow.

Many organisations get this backwards. The right people don’t have the full picture because information is siloed. Meanwhile, it can be unclear who has the authority to make decisions. With this ambiguity, the person who knows something critical may have no clear route to get it to where it needs to be, and things can grind to a halt.

Practical takeaways:

- Identify your flight director. For every significant decision, it should be clear who has the authority to make it before the discussion starts, not after.

- Give people permission to speak across boundaries. Encourage people to flag problems outside of their immediate area. In mission control, a controller who spots something in another system is expected to say so.

- Don't create single points of failure. Critical information should never sit with just one person. If only one person knows something important, that is a risk. Key information should be documented and shared before high-stakes moments, not during them.

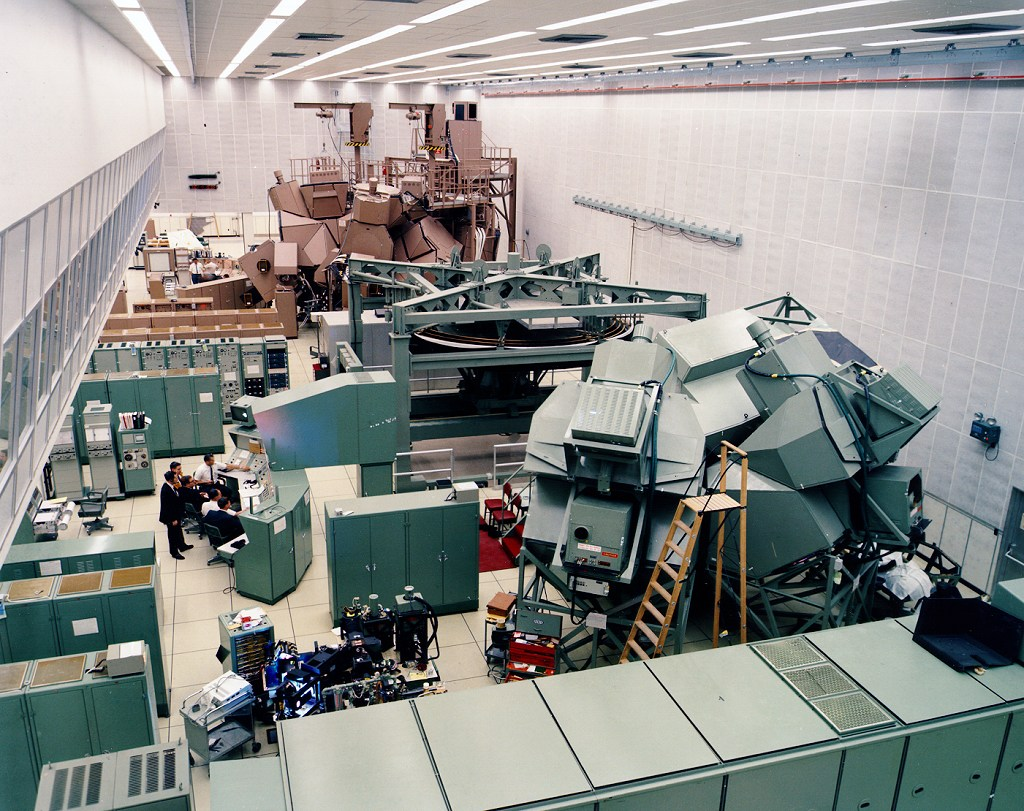

Simulation culture builds confidence

Thirty-six seconds after lift-off, Apollo 12 was struck by lightning. Sixteen seconds later, it was struck again. Mission control's telemetry stream became garbled, while inside the capsule the astronauts' dashboards lit up with red warning lights. Initially, nobody knew what had happened.

John Aaron, the Electrical, Environmental and Consumables Manager (EECOM) in mission control, called out calmly: "Flight, try SCE to Aux." He had recognised this failure pattern from a previous simulation. It was an obscure command: neither the flight director nor CAPCOM knew what it meant. But astronaut Alan Bean knew exactly where the switch was. He had spent countless hours in the simulator and knew every aspect of the spacecraft's controls. He flipped it. Telemetry was restored and, once checks had confirmed no significant malfunctions, Apollo 12 went on to become the second mission to land on the Moon.

Aaron's quick thinking earned him the legendary NASA title of "steely-eyed missile man." But the lesson here isn't about one person saving the day. A lightning strike during launch had never been covered in training. What the simulations had built was something harder to measure: deep familiarity, pattern recognition, and the composure to act quickly when facing something entirely new.

This is a clear example of when simulation saved lives.

Most organisations only find out how their teams perform when they are tested for real. A product launch that goes wrong, a client crisis, an unexpected operational failure. These are not the moments to find out.

Practical takeaways:

- Rehearse before it matters. Scenario planning, tabletop exercises, and simulations give teams the opportunity to build familiarity and surface problems in a safe environment.

- Safe failure is valuable. Getting something wrong in a low-stakes environment is an opportunity, not a problem. Teams that have practised being wrong are better equipped to respond when it matters.

- Treat preparation as an investment, not an overhead. Time spent rehearsing and scenario planning pays dividends when the real test arrives.

Things to watch out for

NASA's communication principles are simple to describe, but often difficult to implement. A few common pitfalls are worth naming.

Separating facts from assumptions requires psychological safety. If people fear being wrong, they will present assumptions as facts rather than risk looking uncertain. The discipline only works if the culture supports it.

Information sharing can become noise. The goal of wide information flow is clarity, not volume. Teams that overcorrect can end up overwhelming decision-makers with data rather than insight. The mission control model works because every controller filters and distils before feeding information forward.

Simulation only works if people care about it. Exercises that feel low-stakes will produce low-stakes behaviour. If the experience isn't engaging enough to hold attention and create investment in the outcome, the habits you are hoping to build simply won't form.

Wrapping up

NASA didn't land on the Moon by accident. The communication culture in that mission control room was the result of years of deliberate design, relentless practice, and a commitment to learning from every simulation, every debrief, and every failure.

Most of us are not trying to land on the Moon. But the principles translate directly.

To recap the three ideas we have explored:

- State facts as facts and assumptions as assumptions; clarity under pressure starts with intellectual honesty

- Make information wide and authority narrow; everyone should have the picture, but someone must own the call

- Build familiarity before you need it; simulation and rehearsal create the confidence to handle what you have not anticipated

Further reading

The BBC's 13 Minutes to the Moon is a great podcast with audio and interviews from flight controllers and astronauts, covering everything from Apollo 11 all the way up to Artemis II.

We're currently building a library of micro simulations that let you practise with simulation in just two to five minutes. Check out our micro simulations here.

References

Images courtesy of NASA

Grammarly & Harris Poll (2022). The State of Business Communication. https://www.grammarly.com/business/Grammarly_The_State_Of_Business_Communication.pdf